Sound Event Localization & Detection

Research Visualizations

Overview

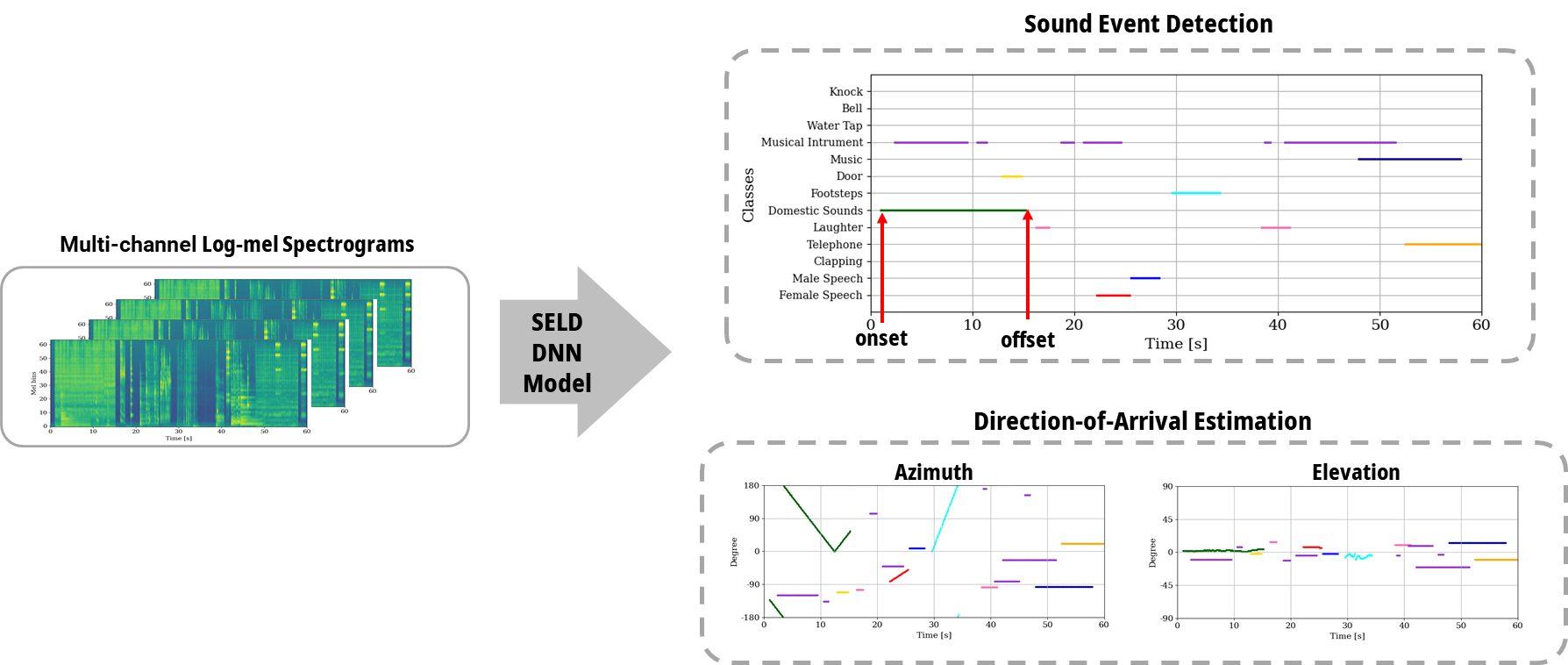

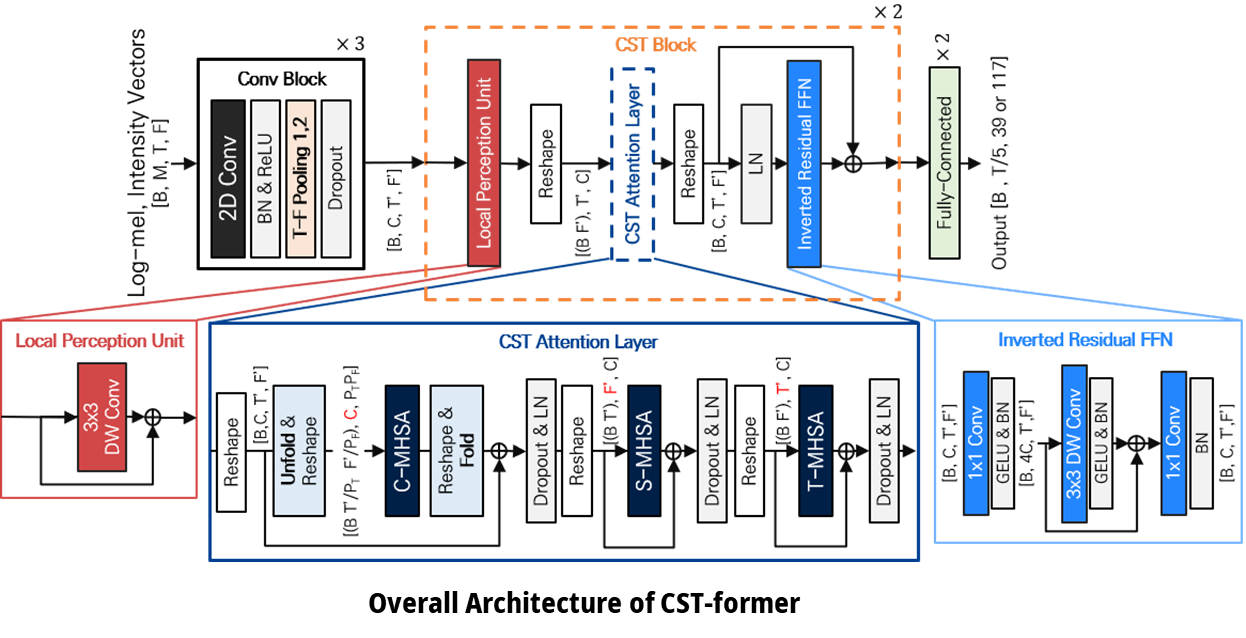

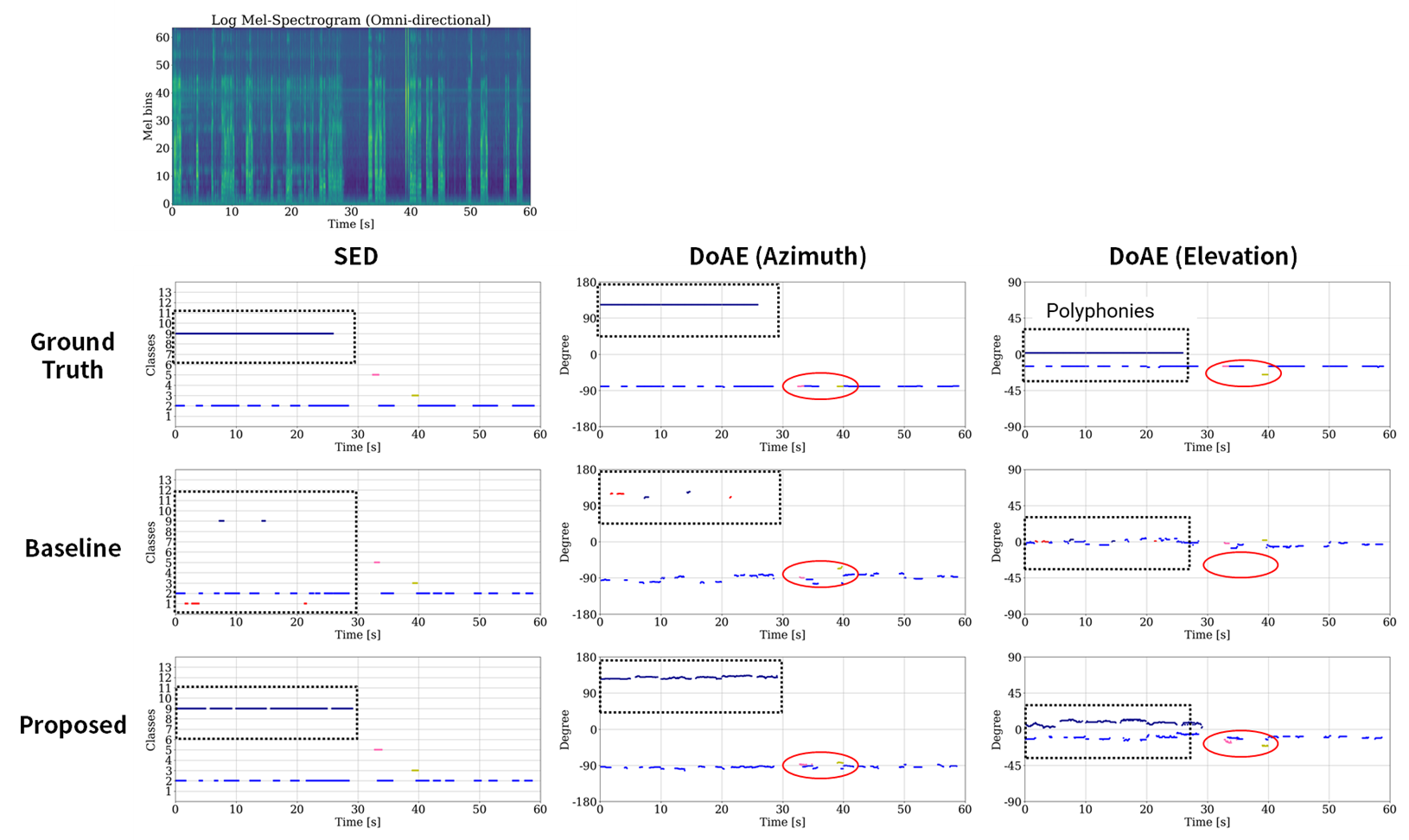

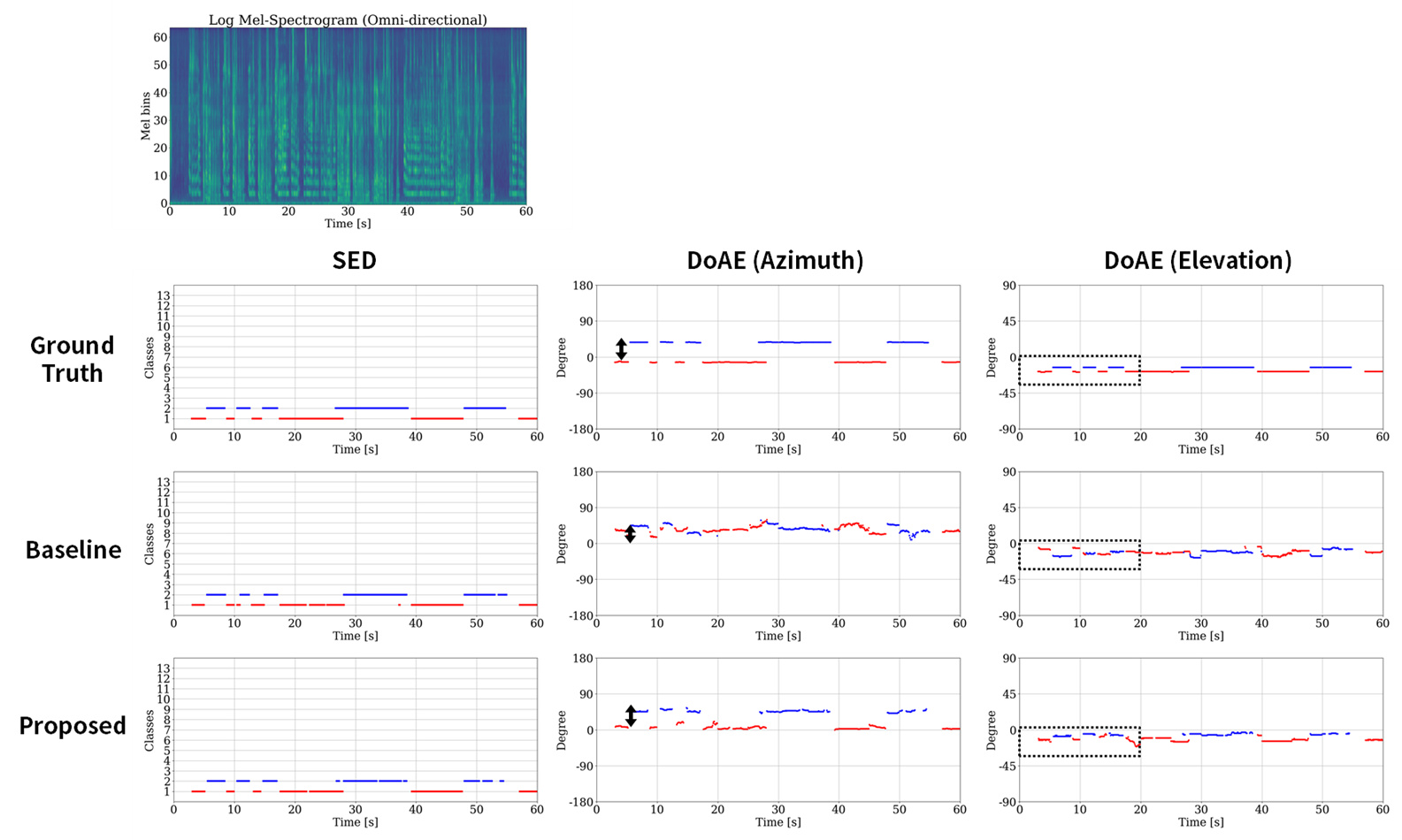

Sound event localization and detection (SELD) is the task of classifying sound events and localizing their direction of arrival (DoA) from multi-channel acoustic signals. Sound event detection identifies the onset and offset of each sound class, which makes spectral and temporal information important. DoA localization estimates the azimuth and elevation of each sound source over time, which additionally requires the spatial information carried by the multiple microphone channels. Although spatial, spectral, and temporal information are all crucial for SELD, prior studies use spectral and channel information only as the embedding for temporal attention, which learns temporal context. This limits the deep neural network (DNN) from extracting meaningful features in the spectral and spatial domains. We therefore propose a novel framework, the Channel-Spectro-Temporal Transformer (CST-former), which improves SELD performance by applying attention mechanisms independently to each domain, enabling the DNN to learn the contexts of space, frequency, and time.
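To make the DoA description above concrete, the following minimal sketch converts an (azimuth, elevation) pair into a unit direction vector and measures the angle between two directions. It is an illustration only and is not taken from the CST-former code. The function names `doa_to_unit_vector` and `angular_error_deg` are hypothetical, and the angle convention (azimuth measured counter-clockwise in the x-y plane, elevation measured upward from that plane, both in degrees) is an assumption that may differ from a given dataset.

```python
import math


def doa_to_unit_vector(azimuth_deg, elevation_deg):
    """Convert a DoA given in degrees to a Cartesian unit vector (x, y, z).

    Assumed convention: azimuth is measured in the x-y plane from the x-axis,
    elevation is measured from the x-y plane toward the z-axis.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    return (
        math.cos(el) * math.cos(az),
        math.cos(el) * math.sin(az),
        math.sin(el),
    )


def angular_error_deg(doa_a, doa_b):
    """Angle in degrees between two DoAs, each an (azimuth, elevation) pair."""
    va = doa_to_unit_vector(*doa_a)
    vb = doa_to_unit_vector(*doa_b)
    dot = sum(a * b for a, b in zip(va, vb))
    # Clamp to [-1, 1] to guard against floating-point rounding before acos.
    dot = max(-1.0, min(1.0, dot))
    return math.degrees(math.acos(dot))
```

For example, `angular_error_deg((0, 0), (90, 0))` compares a source straight ahead with one directly to the side, under the assumed convention. Working with unit vectors rather than raw angles avoids the wrap-around discontinuity of azimuth at ±180°.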

Focus Areas

- Sound event localization and detection using deep learning.

Related Publications

- DeepASA: An Object-Oriented One-for-All Network for Auditory Scene Analysis. D. Lee, Y. Kwon, and J-W. Choi • Conference on Neural Information Processing Systems (NeurIPS), San Diego, USA • 2025

- Self-Guided Target Sound Extraction and Classification through Universal Sound Separation Model and Multiple Clues. Y. Kwon, D. Lee, D. Kim, and J-W. Choi • DCASE Workshop, Barcelona, Spain • 2025

- CST-FORMER: Transformer with Channel-Spectro-Temporal Attention for Sound Event Localization and Detection. Y. Shul and J-W. Choi • IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP), Seoul, Korea • 2024